Editor’s note: This article was originally published in February 2017, and was re-posted in February 2021.

Here at Handshake, we deploy our site multiple times a day. Postgres is our primary data store, and since many migration operations are very cheap with Postgres we usually bundle database migration alongside our deploys. For this to be successful and not negatively impact performance during peak usage hours, we follow some simple rules for running migrations in production. For example, a few are:

- Create indexes concurrently.

- Don't create NOT NULL columns in one operation for large tables.

- Don't create columns with default values in large tables.

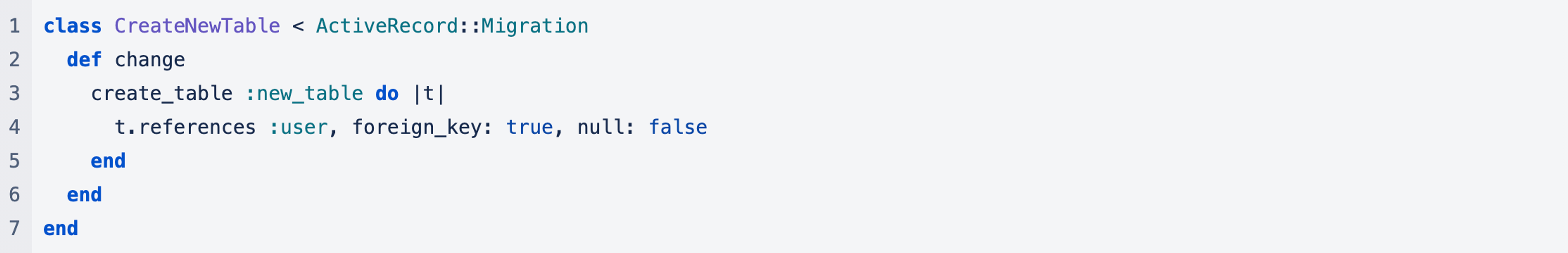

However, a simple migration we ran a couple weeks ago brought the site down for an entire minute. Here's a simple version of it:

Rails Version:

SQL version:

Can you spot the problem?

The problem is adding the foreign key constraint on the new user reference. By default in Postgres, adding a foreign key constraint on a table requires an ACCESS EXCLUSIVE lock on the table the key is referencing. The ACCESS EXCLUSIVE lock in Postgres is the most restrictive type of lock you can acquire. As the docs say:

Only an ACCESS EXCLUSIVE lock blocks a SELECT (without FOR UPDATE/SHARE) statement.

So it blocks everything including SELECT statements on the table. SELECT statements happen to take out an ACCESS SHARE lock on the table, which is the least restrictive lock in Postgres. The only lock that ACCESS SHARE conflicts with is the ACCESS EXCLUSIVE lock.

Normally this isn't a problem. The steps Postgres follows to add this constraint are:

- Acquire ACCESS EXCLUSIVE lock on the users table.

- Validate the integrating of all user_id references in the new table.

- Commit the transaction with the foreign key relationship.

- Release the lock.

Since the new table we're adding is completely empty, the whole process is very quick. The problem comes in the way Postgres hands out locks. Locks are handed out using a queue, so if processes A and B are trying to acquire locks on the same resource, and process A requests the lock first, it will grant the lock to A first even if process B is requesting a less restrictive lock. This is where we hit trouble.

Looking through the database logs from the migration there are many entries of:

LOG: process 31025 still waiting for AccessExclusiveLock on relation 17966 of database

The process waiting is the one running the migration, and relation 17966 corresponds to the users table. You might not think this alone is cause for alarm; perhaps the migration will just take a while. As described above though locks are given in a first come, first served basis. As a result all the ACCESS SHARE locks from all select statements on the users table are blocked.

The next line in the logs reveals everything this is blocking:

DETAIL: Processes holding the lock: 29386. Wait queue: 31025, 30551, 29889, 30168, 29602, 30927, 29598, 30653, 29651, 29667, 29941, 30433, 29785, 30552, 30324, 30456, 30076, 29385, 29430, 29726, 30915, 29847, 31027, 30825, 29544, 31028, 30376, 29662, 29768, 31029, 30865, 31031, 31030, 31032, 31034, 31033, 29596, 31035, 31036, 31037, 31020, 31039, 29771, 30106, 30630, 31040, 30547, 31038, 31042, 31044, 31043, 31045, 31046, 31047, 31041, 31048, 31049, 31051, 31052, 31053, 31054, 31056, 31057, 31055, 31058, 31059, 31060, 31061, 31062, 31063, 31064, 31065, 31067, 31066, 31068, 31069, 31072, 31071, 31070, 31073, 31075, 31074, 31077, 31078, 31079, 31076, 31080, 31082, 31081, 31083, 31084, 31087, 31086, 31085, 31088, 31091, 31092, 31090, 31093, 31095, 31094, 31096, 31098, 31097, 31099, 31101, 31100, 31102, 31103, 31104, 31105, 31107, 31106, 31108, 31022, 31023, 31109, 31110, 31111, ...

What you can see here is that another process, 29386, is blocking the migration from getting its lock on the users table. Digging more through the logs that process corresponded to a long running query for one of our mailers. The long running read blocked the migration, which in turn blocked all reads on the users table.

We ended up aborting the migration and the site went back up.

Prevention

In a perfect world deploys never cause downtime. We do a root-cause analysis for every issue we encounter and change our processes to prevent future downtime. In this case, there are several measures that can prevent issues of this nature.

Long running queries

The real root cause of this issue was that we tried to run a migration while there was a long running query on the users table. Long running queries are usually considered a Bad Idea™, and we regularly try to find long running and slow queries and remove them from the application.

Timeouts

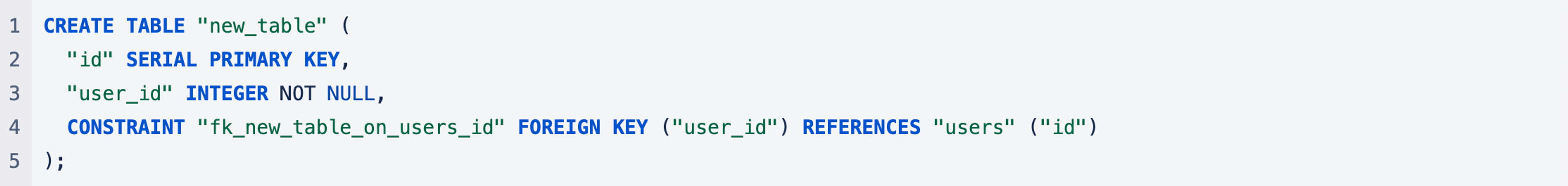

As a backup in case you can't find all potential long running queries, Postgres has two configurable timeouts that would help in this scenario. The first is lock_timeout:

Abort any statement that waits longer than the specified number of milliseconds while attempting to acquire a lock on a table, index, row, or other database object. The time limit applies separately to each lock acquisition attempt. The limit applies both to explicit locking requests (such as LOCK TABLE, or SELECT FOR UPDATE without NOWAIT) and to implicitly-acquired locks. If log_min_error_statement is set to ERROR or lower, the statement that timed out will be logged. A value of zero (the default) turns this off.

The lock_timeout can be set for an individual session, so when adding the foreign key constraint we would instead set the lock_timeout on the connection we have open via:

The other option is statement_timeout, which acts the same as lock_timeout but times out any statement (including lock requests.) In our instance statement_timeout could be set to something reasonable to abort long running queries in the first place.

Check for locks

A way to check for any long held locks before running a migration that requires taking out an ACCESS EXCLUSIVE lock is querying directly against Postgres. At Handshake we're big fans of the pg and pg-extras plugins for the Heroku CLI. Using that you can check for long-held queries by running:

It should be noted that using the pg:locks command in the Heroku CLI wouldn't have worked in this case since that query is restricted to only exclusive locks. You can instead run this query snippet which shows the longest 10 held locks in your database, regardless of lock type:

Running a simple check to make sure no long held locks are open in your database would be a good idea before running migrations that require a high degree of locking (pretty much anything that adds a constraint).

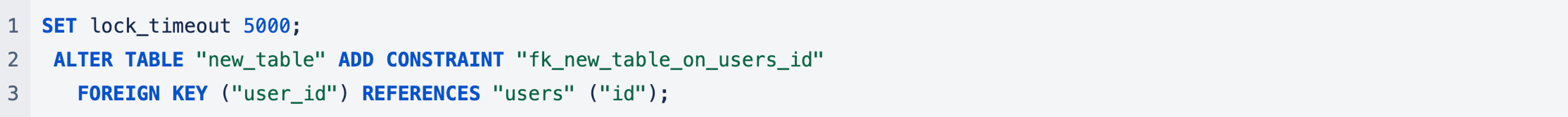

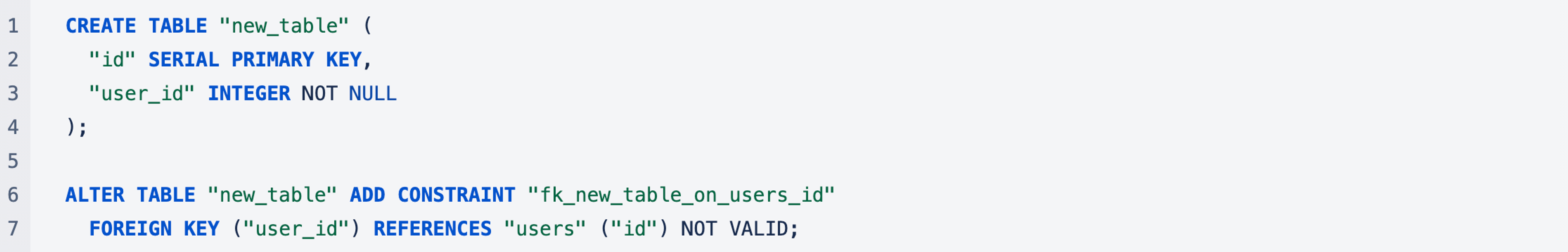

ALTER TABLE NOT VALID

An additional precaution that could be employed for this specific scenario is changing the foreign key constraint to be added with NOT VALID.

When NOT VALID is added to the foreign key constraint "the potentially-lengthy initial check to verify that all rows in the table satisfy the constraint is skipped." That means no EXCLUSIVE ACCESS lock is taken out on the table you're referencing.

Conclusion

Many Postgres migrations, like adding new columns, can be quite cheap and safe to do in production. But as we've seen here, adding constraints requires a higher degree of caution due to the more restrictive locks that need to be taken out. While blocking reads on the users table were certainly surprising to us for such a simple migration, the post-mortem taught us more about the internals of Postgres, and we've fixed many parts of our procedures to prevent such an issue from happening again.

If these types of problems interest you I invite you to apply to one of our Engineering Roles!